Validated learning about customers is the measure of progress in a Lean Startup — not lines of working code or achieving product development milestones.

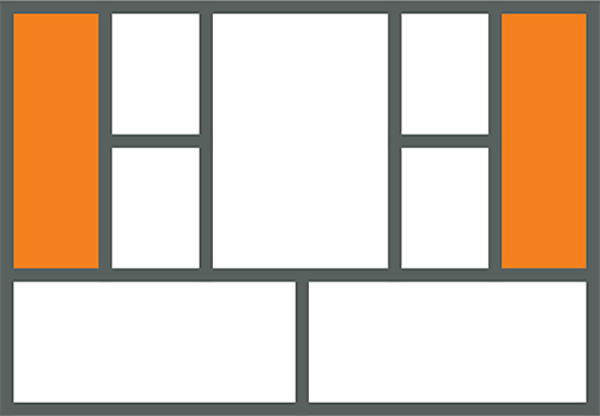

So let's take a look at where in the product development process we do this type of learning:

Where we learn about customers

While some learning happens during the requirements stage (driven by customer development activities), most of the learning happens only after we ship a release, with very little learning during development and QA.

Even though building a product is the purpose of a startup, product development actually gets in the way of learning about customers.

While we can’t eliminate development/QA or increase customer learning during those stages, we can shorten the cycle time from requirements to release to get to the learning parts faster. That is exactly what Continuous Deployment does.

I’ve written about my transition from a traditional development process to Continuous Deployment as a case study on Eric Ries’ Startup Lessons Learned blog. The key concept in Continuous Deployment is switching from large batch sizes to small batch sizes. For me, that meant switching from releasing every two weeks to releasing every day. You can’t always build a feature in a day, but you get good at building features incrementally and deploying non-user-facing pieces first. The result was an immediate and noticeable improvement in cycle time, faster feedback, and, most importantly, more time for activities outside product development, like learning.

But even with a streamlined product development flow, how do you ensure you’re actually building what customers want and not simply cranking out features faster?

Here are some rules I use:

How I build features

Rule 1: Don’t be a feature pusher

If you’ve followed a customer discovery process, identified a problem worth solving, and, as a result, defined a minimum viable product (MVP), don’t push any new features until you’ve validated the MVP. This doesn’t mean you stop development. It means most of your time should be spent measuring and improving existing features rather than chasing shiny new ones.

From experience, I know this can be a hard rule to enforce. Many of us still measure progress in lines of working code and believe our problems with traction are rooted in not finding the right killer features. The next rule helps with that.

Rule 2: Constrain the features pipeline

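A good way to enforce that split, with most of your effort going to existing features and only a small share to new ones (the 80/20 rule), is to constrain the features pipeline. This is a common Agile practice; the addition here is a validated learning state that every feature must pass through.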

Ideally, a new feature must be pulled by more than one customer for it to appear in the backlog. It is okay to experiment with some new features that come straight out of your head, but I still try to find ways to test them with a few customers first (in a demo or product presentation) before committing them to the backlog.

Passion around a vision is good.

Passion around building what customers want is better.

The number of features in progress is constrained by the number of developers, and so is the number of features waiting for validation. This ensures that you cannot work on a new feature until a previously deployed feature has been validated.
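To make the constraint concrete, here is a minimal sketch of such a pipeline in Python. It is only one possible reading of the rule, not a description of any particular tool: the class name `FeaturePipeline`, its method names, and the feature names are all hypothetical. Both the in-progress stage and the awaiting-validation stage are capped at the number of developers, so once the validation stage is full, finished work cannot be deployed and no new work can be started.

```python
from dataclasses import dataclass, field
from typing import List


class PipelineFull(Exception):
    """Raised when a move would exceed a stage's work-in-progress limit."""


@dataclass
class FeaturePipeline:
    # Assumption: both stage limits equal the number of developers.
    developers: int
    backlog: List[str] = field(default_factory=list)
    in_progress: List[str] = field(default_factory=list)
    awaiting_validation: List[str] = field(default_factory=list)
    validated: List[str] = field(default_factory=list)
    nixed: List[str] = field(default_factory=list)

    def start(self, feature: str) -> None:
        """Pull a feature from the backlog into development."""
        if len(self.in_progress) >= self.developers:
            raise PipelineFull("all developers are busy")
        self.backlog.remove(feature)
        self.in_progress.append(feature)

    def deploy(self, feature: str) -> None:
        """Ship a finished feature; it now waits for validation."""
        if len(self.awaiting_validation) >= self.developers:
            raise PipelineFull("validate a deployed feature first")
        self.in_progress.remove(feature)
        self.awaiting_validation.append(feature)

    def close_loop(self, feature: str, keep: bool) -> None:
        """Record the validation outcome: keep the feature or nix it."""
        self.awaiting_validation.remove(feature)
        (self.validated if keep else self.nixed).append(feature)


# Usage with a single developer and two hypothetical features.
pipeline = FeaturePipeline(developers=1, backlog=["export", "sharing"])
pipeline.start("export")
pipeline.deploy("export")        # "export" now awaits validation
pipeline.start("sharing")
try:
    pipeline.deploy("sharing")   # blocked: validation stage is full
except PipelineFull as err:
    blocked = str(err)
pipeline.close_loop("export", keep=True)
pipeline.deploy("sharing")       # allowed once "export" is validated
```

The design choice worth noting is that the limit sits on the validation stage as well as on development. Without it, features could pile up in a "deployed" state indefinitely and nothing would force the learning step to happen.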

Rule 3: Close the loop with qualitative metrics first

Because quantitative metrics can take some time to collect, I prefer to validate all features qualitatively first. The feature is nixed immediately if I don’t get a strong initial signal. Otherwise, it lives until the quantitative data is in.

For qualitative feedback, I’ll contact the customer (or customers) who requested the feature once it goes live and ask them for feedback. Following Eric’s advice on focusing on macro effects, it’s not enough to test the “coolness” factor of the feature. What matters is whether the feature solves the customer’s problem and, even more importantly, whether it can make or keep the sale. I also tend to highlight new features in face-to-face usability tests and periodic “release-update” newsletters, so they get more attention.

On the quantitative side, I still use a combination of KISSmetrics and Mixpanel to collect usage data on the feature.

Counterintuitive?

Some of these ideas, such as using small batch sizes, constraining the features pipeline, and forcing a stop if we aren’t learning, seem counterintuitive at first. Most of us have been trained to specialize in departments like development, QA, and marketing — turning ourselves into efficient large batch processing machines running at full capacity.

This mode of working doesn’t hold up even in the manufacturing world, where tasks are more predictable (less variable) and repetitive. Building software, on the other hand, is highly variable, which makes the problems with large batches even worse. If you want the technical explanation of why this is so, I highly recommend Donald Reinertsen’s book “The Principles of Product Development Flow.”

In a Lean Startup, Product Development isn’t just about the code anymore. Everyone is responsible for learning about customers.

Update: If you liked this content, consider checking out my book, Running Lean, which dedicates 50 pages to this topic alone.

You can learn more here: Get Running Lean.