First, what is an actionable metric?

An actionable metric ties specific and repeatable actions to observed results.

The opposite of actionable metrics is vanity metrics (like web hits or the number of downloads), which only document the product's current state but offer no insight into how we got here or what to do next.

In my last post, I highlighted the importance of thinking about Pivots versus Optimizations before product/market fit.

Pivots are characterized by maximizing learning, while Optimizations are characterized by maximizing efficiency.

This distinction carries over to metrics too. As we’ll see, some metrics matter more than others depending on the stage of the company, but more importantly, how these metrics are measured makes them actionable versus not. I’ll share my three rules for actionable metrics, derived from Lean Startup principles, and specifically focus on what metrics I measure and how I measure them.

Rule 1: Measure the “Right” Macro

Eric Ries recommends focusing on the macro effect of an experiment (such as sign-ups versus button clicks), but it’s just as important to focus on the right macro. For example, spending a ton of effort to drive sign-ups for a product with low retention is a waste.

Identify Key Metrics

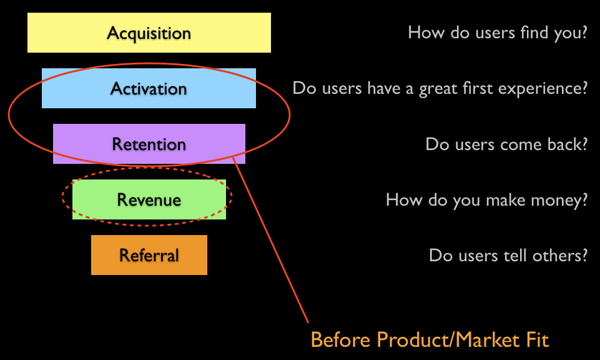

The good news is that there are only a handful of macro metrics that matter, and Dave McClure has distilled them down to 5 key metrics. Of the 5, only two matter before Product/Market Fit — Activation and Retention.

Before Product/Market Fit, the goal is validating that you have built something people want. You don’t need lots of traffic sources to support learning, and people don’t usually refer a product unless they have used and liked the service. So both Acquisition and Referral can be tabled for now. What does correlate with building something people want is providing a great first experience (Activation) and, most important of all, that they come back (Retention).

Note: Some of you might have noticed that I swapped Revenue with Referral from Dave’s version. This is because I believe in charging from day 1, which more naturally aligns (but does not replace) Revenue with Retention.

Map Metrics to Actions

The next step is to map specific actions in your product to Activation and Retention.

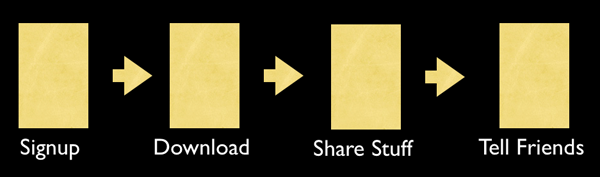

Activation Actions

Activation actions typically start with your sign-up process and need to end with the key activities that define your product’s unique value proposition.

Note: The “Tell Friends” here is used to publicize shared galleries, and I don’t count it towards “Referral.” I view “Referral” actions as more deliberate endorsements of the product, such as through an affiliate program.

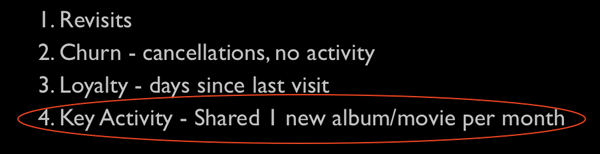

Retention Actions

There are several ways to define retention, and Andrew Chen even distinguishes between retention and engagement. I prefer to tie my retention action to the key activity that maps to the UVP.

Rule 2: Create Simple Reports

Reports that are hard to understand won’t get used. Similarly, reports spread across pages and pages of numbers (ahem, Google Analytics) won’t be actionable. I am a big fan of simple 1-page reports, and funnels are a great format for that.

Funnel Reports — The Good, The Bad, and The Ugly

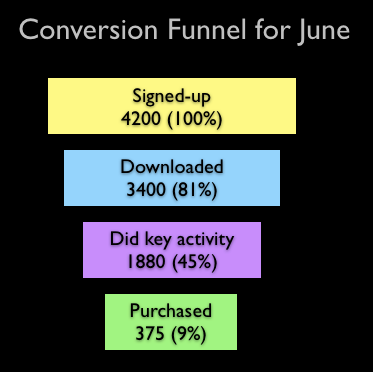

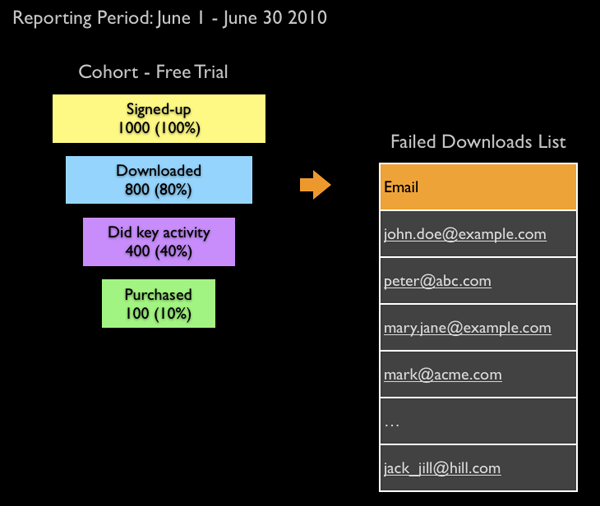

Funnels are a great way to summarize key metrics: They are simple, visual, and map well to the Activation flow (and Dave’s AARRR startup metrics in general). Here is an example of a funnel for a service that is offered under a 14-day free trial.

But funnel analysis, as implemented by analytics tools today, has several shortcomings:

Tracking long life-cycle events

For one, it is hard to track long lifecycle events accurately. Almost all funnel analysis tools use a reporting period where events generated in that period are aggregated across all users. This skews numbers at the boundaries of the funnel. But more importantly, because you are constantly changing the product, it is impossible to tie back observed results to specific actions you might have taken a month ago.
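To make the boundary problem concrete, here is a minimal Python sketch of how a reporting-period funnel counts events. The event log, the event names (`signed_up`, `purchased`), and the `period_funnel` helper are all hypothetical and invented for illustration; they are not taken from any particular analytics tool.

```python
from datetime import date

# Hypothetical event log: (user_id, event_name, event_date). All data is made up.
EVENTS = [
    ("u1", "signed_up", date(2010, 6, 20)),
    ("u1", "purchased", date(2010, 7, 4)),   # signed up in June, paid in July
    ("u2", "signed_up", date(2010, 7, 2)),
    ("u3", "signed_up", date(2010, 7, 25)),
    ("u3", "purchased", date(2010, 8, 8)),   # paid after the July window closed
]

def period_funnel(events, start, end, steps):
    """Count distinct users per step using only events dated inside [start, end]."""
    counts = {}
    for step in steps:
        users = {
            uid for uid, name, day in events
            if name == step and start <= day <= end
        }
        counts[step] = len(users)
    return counts

july = period_funnel(
    EVENTS, date(2010, 7, 1), date(2010, 7, 31), ["signed_up", "purchased"]
)
print(july)  # {'signed_up': 2, 'purchased': 1}
```

The single July purchase belongs to a user who signed up in June, while the July sign-up who did pay is missing because the payment landed in August. The July conversion number therefore describes neither group accurately.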

Tracking split tests

A more serious manifestation of the same problem is tracking split-tests for a macro metric like Revenue which also has a long life cycle. An example of an experiment I am currently running is studying the long-term consequences of offering a Freemium plan alongside a Free Trial plan. I believe a properly modeled Freemium plan should behave like a Free Trial. The only difference is that Free Trials have a set expiration, while Freemium users outgrow the service after some time X. I can reasonably guess that I will get more sign-ups with Freemium, but the bigger question is whether that will also translate to more Retention/Revenue. If so, what is the average time to conversion (period X)? I can’t answer these types of questions with the current funnel tools.

Measuring Retention

And finally, funnel tools don’t provide a way to track retention, which needs to track user activity over long periods.

Funnels Alone Are Not Enough. Say Hello to the Cohort.

So while funnels are a great visualization tool, funnels alone are not enough. Today's analytics tools work well for micro-optimization experiments (such as landing page conversion) but fall short for macro-pivot experiments.

The answer is to couple funnels with cohorts.

Cohort Analysis is very popular in medicine, where it is used to study the long-term effects of drugs and vaccines:

A cohort is a group of people who share a common characteristic or experience within a defined period (e.g., are born, are exposed to a drug or a vaccine, etc.). Thus a group of people who were born on a day or in a particular period, say 1948, form a birth cohort. The comparison group may be the general population from which the cohort is drawn, or it may be another cohort of persons thought to have had little or no exposure to the substance under investigation, but otherwise similar. Alternatively, subgroups within the cohort may be compared with each other.

Source: Wikipedia

We can apply the same concept of the cohort or group to users and track their usage lifecycle over time. For our purposes, a cohort is any property attributed to a user we wish to track. The most common cohort used is “join date,” but as we’ll see, this could just as easily be the user’s “plan type,” “operating system,” “sex,” etc.

Let's see how to apply cohorts to overcome the shortcomings with the funnels we covered above.

Tracking Long LifeCycle Events

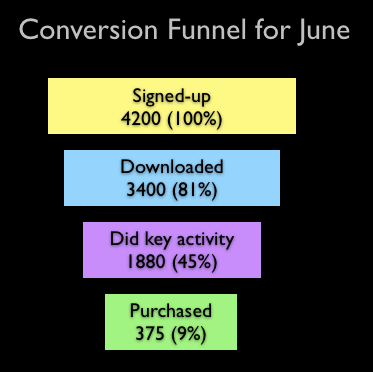

The first report I recommend implementing is a “Weekly Cohort Report by Join Date.” This report functions like a canary in the coal mine and is a great alerting tool for picking up on actions that had overall positive or negative impacts.

You group users by the week of the year in which they signed up and track all their events over time. This report was generated from the same data used in the funnel above (which I’ve shown again for easy comparison). The key difference from the funnel report is that, other than the join date, user events don’t need to occur within the reporting period. You’ll notice immediately that many conversion numbers (especially Purchased) are quite different because a cohort report doesn’t suffer from the boundary issues that affect simple funnel reports.

More importantly, though, the weekly cohort report more visibly highlights significant changes in the metrics, which can then be tied back to specific activities done in a particular week.
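A weekly cohort report by join date can be sketched in a few lines of Python. This is only an illustration under assumptions: the event log, the event names, and the `weekly_cohort_report` helper are hypothetical, and cohorts are keyed by ISO year and week number, which is one reasonable reading of "week in the year."

```python
from collections import defaultdict
from datetime import date

# Hypothetical event log: (user_id, event_name, event_date). All data is made up.
EVENTS = [
    ("u1", "signed_up", date(2010, 7, 5)),
    ("u1", "purchased", date(2010, 7, 19)),
    ("u2", "signed_up", date(2010, 7, 7)),
    ("u3", "signed_up", date(2010, 7, 13)),
    ("u3", "purchased", date(2010, 7, 27)),
]

def weekly_cohort_report(events, steps, join_event="signed_up"):
    """Group users by the ISO week they joined; count who ever reached each step."""
    cohort_of = {}
    for uid, name, day in events:
        if name == join_event:
            iso = day.isocalendar()
            cohort_of[uid] = (iso[0], iso[1])  # (ISO year, ISO week)

    # cohort -> step -> set of users; event dates are deliberately ignored here.
    reached = defaultdict(lambda: defaultdict(set))
    for uid, name, _day in events:
        if uid in cohort_of and name in steps:
            reached[cohort_of[uid]][name].add(uid)

    return {
        cohort: {step: len(by_step[step]) for step in steps}
        for cohort, by_step in sorted(reached.items())
    }

report = weekly_cohort_report(EVENTS, ["signed_up", "purchased"])
for cohort, counts in report.items():
    print(cohort, counts)
# (2010, 27) {'signed_up': 2, 'purchased': 1}
# (2010, 28) {'signed_up': 1, 'purchased': 1}
```

Because only the join event decides which row a user lands in, a purchase is credited to the week the user signed up, no matter how much later it happens.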

Tracking Split Tests

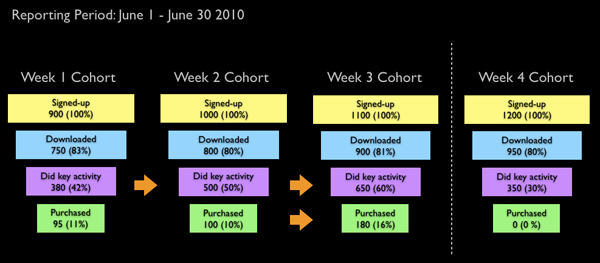

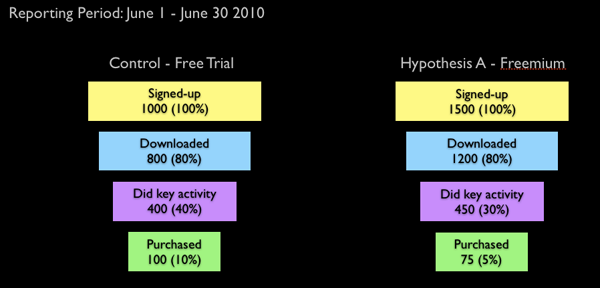

Besides reactive funnel monitoring, cohorts can also be used to measure split-test experiments proactively. Here is a report that uses the “plan type” as a cohort for the “Freemium” versus “Free Trial” experiment I described above.

Disclaimer: My Freemium versus Free Trial experiment is still underway, and these results are made up.

You can see that while activations are higher with the Freemium plan, Revenue (so far) is lower. That may change over time, and it’s important to know the average time to conversion so the Freemium plan can be modeled accordingly.
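The average time to conversion can be estimated from the same kind of event log. The sketch below is a rough illustration, assuming hypothetical event names (`signed_up`, `purchased`) and made-up data; it measures the days between each user's first sign-up and first purchase and ignores users who have not converted yet.

```python
from datetime import date
from statistics import mean

# Hypothetical event log: (user_id, event_name, event_date). All data is made up.
EVENTS = [
    ("u1", "signed_up", date(2010, 7, 5)),
    ("u1", "purchased", date(2010, 7, 19)),
    ("u2", "signed_up", date(2010, 7, 7)),   # has not converted yet
    ("u3", "signed_up", date(2010, 7, 13)),
    ("u3", "purchased", date(2010, 8, 12)),
]

def average_days_to_conversion(events, start_event="signed_up", goal_event="purchased"):
    """Mean number of days from a user's first start event to their first goal event."""
    first = {}  # (user_id, event_name) -> earliest date seen
    for uid, name, day in events:
        if name in (start_event, goal_event):
            key = (uid, name)
            if key not in first or day < first[key]:
                first[key] = day

    waits = [
        (first[(uid, goal_event)] - started).days
        for (uid, name), started in first.items()
        if name == start_event and (uid, goal_event) in first
    ]
    return mean(waits) if waits else None

print(average_days_to_conversion(EVENTS))  # 22
```

Note that this average only covers users who have already converted, so it will drift upward as slower converters come in while an experiment is still running.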

You can create a cohort out of any user property you collect and run reports to answer questions like:

1. Do Mac users convert better than Windows users?

2. Do certain search keywords convert better than others?

3. Do female users convert better than male users?

etc.
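Grouping by an arbitrary user property can be sketched as follows. The user properties, event names, and the `conversion_by_property` helper are hypothetical, and the numbers are invented purely to show the mechanics.

```python
from collections import defaultdict

# Hypothetical user properties and events. All data is made up.
USERS = {
    "u1": {"plan": "freemium", "os": "mac"},
    "u2": {"plan": "freemium", "os": "windows"},
    "u3": {"plan": "trial", "os": "mac"},
    "u4": {"plan": "trial", "os": "windows"},
}
EVENTS = [
    ("u1", "activated"),
    ("u2", "activated"),
    ("u3", "activated"),
    ("u3", "purchased"),
]

def conversion_by_property(users, events, prop, goal):
    """Share of users reaching `goal`, grouped by the value of one user property."""
    converted = {uid for uid, name in events if name == goal}
    totals = defaultdict(int)
    hits = defaultdict(int)
    for uid, props in users.items():
        value = props.get(prop, "unknown")
        totals[value] += 1
        if uid in converted:
            hits[value] += 1
    return {value: hits[value] / totals[value] for value in totals}

print(conversion_by_property(USERS, EVENTS, "plan", "activated"))
# {'freemium': 1.0, 'trial': 0.5}
print(conversion_by_property(USERS, EVENTS, "os", "purchased"))
# {'mac': 0.5, 'windows': 0.0}
```

Swapping the `prop` argument is all it takes to turn the same data into a different cohort report.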

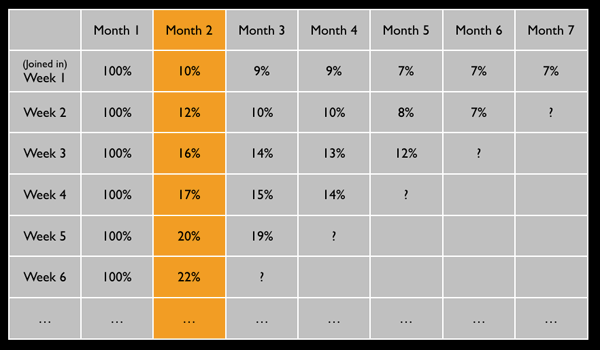

Tracking Retention

Now for the most important metric before Product/Market Fit — Retention. This report is also generated using a weekly cohort by join date, but instead of tracking conversion, it tracks the key activity over time.

We only track “Activated” users, which is why all the Month 1 retention values are 100%. A Retention report can tell you if you are moving in the right direction towards building a product people want or simply spinning cycles.
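Here is a minimal sketch of how such a retention table could be computed. It rests on several assumptions of mine: the data is made up, only activated users appear in `JOINED`, a "month" is a fixed 30-day window counted from the join date, and every activated user counts as active in Month 1.

```python
from collections import defaultdict
from datetime import date

# Hypothetical data: join date per ACTIVATED user, plus dates of the key activity.
JOINED = {
    "u1": date(2010, 7, 5),
    "u2": date(2010, 7, 7),
    "u3": date(2010, 7, 13),
}
ACTIVITY = [
    ("u1", date(2010, 8, 10)),
    ("u1", date(2010, 9, 20)),
    ("u3", date(2010, 8, 20)),
]

def retention_report(joined, activity, months=3, days_per_month=30):
    """Per weekly join cohort, the share of users active in each month since joining."""
    cohorts = defaultdict(set)
    for uid, day in joined.items():
        iso = day.isocalendar()
        cohorts[(iso[0], iso[1])].add(uid)  # (ISO year, ISO week)

    active = defaultdict(set)       # month number -> users active in that month
    active[1].update(joined)        # activation counts as Month 1 activity
    for uid, day in activity:
        if uid in joined:
            offset = (day - joined[uid]).days
            if offset >= 0:
                active[offset // days_per_month + 1].add(uid)

    return {
        cohort: [len(users & active[m]) / len(users) for m in range(1, months + 1)]
        for cohort, users in sorted(cohorts.items())
    }

for cohort, row in retention_report(JOINED, ACTIVITY).items():
    print(cohort, row)
# (2010, 27) [1.0, 0.5, 0.5]
# (2010, 28) [1.0, 1.0, 0.0]
```

Each row reads left to right as Month 1, Month 2, Month 3 for one weekly cohort, so a row that decays quickly points at a week whose users did not come back.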

Rule 3: Metrics are People too

Metrics can only tell you what your users did. They can’t tell you why. A key requirement for making metrics actionable is that you should be able to tie them to actual people. This is useful for locating your most active users and, more importantly, troubleshooting when things go wrong.

This last part is particularly important before product/market fit when you don’t have huge numbers of users and need to rely more on qualitative versus quantitative validation.

Here is an example where I can extract the list of people who failed to complete my funnel's download step. Armed with this list, I don’t have to guess what could have gone wrong. I can pick up the phone or send out an email and ask the user.
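Pulling that list out of raw event data is a simple set difference. The sketch below assumes hypothetical event names (`signed_up`, `downloaded`) and a made-up contact table with placeholder addresses.

```python
# Hypothetical contact table and event log. All data is made up.
CONTACTS = {
    "u1": "ann@example.com",
    "u2": "bob@example.com",
    "u3": "cat@example.com",
}
EVENTS = [
    ("u1", "signed_up"),
    ("u1", "downloaded"),
    ("u2", "signed_up"),
    ("u3", "signed_up"),
]

def dropped_at(events, previous_step, step):
    """Users who completed `previous_step` but never completed `step`."""
    reached_previous = {uid for uid, name in events if name == previous_step}
    reached_step = {uid for uid, name in events if name == step}
    return sorted(reached_previous - reached_step)

to_contact = [CONTACTS[uid] for uid in dropped_at(EVENTS, "signed_up", "downloaded")]
print(to_contact)  # ['bob@example.com', 'cat@example.com']
```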

How do I Create these Reports?

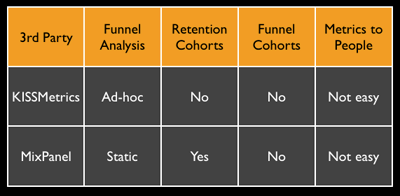

I mentioned earlier that most analytics tools are better suited for micro-optimization experiments than for macro-pivot experiments. This makes sense because optimization is (typically) a post-product/market fit activity, and that is where the money is.

I have been an early user of both KISSmetrics and Mixpanel, and while both tools are good at Funnels, they fall short in Cohort Analysis. Mixpanel currently supports retention cohort reports but not funnel cohorts, and I know Hiten from KISSmetrics is thinking hard about cohorts. So hopefully, we’ll see something soon there.

That said, I was struggling with tracking my Freemium versus Free Trial split test. Hence, as an experiment, I spent an afternoon building my own homegrown cohort analysis tool based on the conceptual People/Events/Properties model I learned from using KISSmetrics. All the reports you see here were generated using that.
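To give a feel for what a People/Events/Properties model might look like in code, here is a minimal sketch. It is my own illustration of the general idea, not the KISSmetrics API and not the tool described above; the `Person` and `Tracker` classes and all method names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime

@dataclass
class Person:
    """One tracked user: an identity, free-form properties, and a list of events."""
    identity: str
    properties: dict = field(default_factory=dict)
    events: list = field(default_factory=list)  # (event_name, timestamp) pairs

class Tracker:
    """Hypothetical in-memory store keyed by user identity."""

    def __init__(self):
        self.people = {}

    def _person(self, identity):
        # Create the person on first sight, otherwise return the existing record.
        if identity not in self.people:
            self.people[identity] = Person(identity)
        return self.people[identity]

    def set_property(self, identity, name, value):
        self._person(identity).properties[name] = value

    def record(self, identity, event_name, when):
        self._person(identity).events.append((event_name, when))

    def who_did(self, event_name):
        """Identities of everyone with at least one event of this name."""
        return sorted(
            person.identity
            for person in self.people.values()
            if any(name == event_name for name, _ in person.events)
        )

tracker = Tracker()
tracker.set_property("u1", "plan", "free_trial")
tracker.record("u1", "signed_up", datetime(2010, 7, 5, 9, 30))
tracker.record("u1", "purchased", datetime(2010, 7, 19, 14, 0))
tracker.set_property("u2", "plan", "freemium")
tracker.record("u2", "signed_up", datetime(2010, 7, 7, 11, 15))
print(tracker.who_did("purchased"))  # ['u1']
```

Keeping properties on the person rather than on each event is what makes it cheap to slice any report by a new cohort later: the events stay the same and only the grouping key changes.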